Your AI Agent Stack Is Spaghetti—It Should Be Lasagna

I'm building a three-layer AI agent architecture for my one-person business

I’m a founder, intrapreneur, and former CIO rethinking governance for the one-person business, navigating sole accountability in the age of intelligent machines—informed by plenty of scar tissue. All posts are free, always. Paying supporters keep it that way (and get a full-color PDF of my book Human Robot Agent plus other monthly extras as a thank-you)—for just one café latte per month.

Most Claude Code projects are just tents. I’m slowly building a city.

Your overnight agentic hackathon is probably not a viable AI agent architecture.

A few weeks ago, I realized my AI infrastructure sucked.

After a year of experimenting with LLMs, I had ChatGPT, Claude, and Gemini all cross-contaminating each other’s project contexts. My data looked as if it had been distributed by a hand grenade across file systems and cloud services. I used Claude, Cowork, NotebookLM, and Perplexity like a five-year-old uses a box of crayons. And I had workflows and business processes flowing and connecting like noodles in a bowl of ramen someone dropped from the tenth floor.

If I ever wanted to scale my business, I needed to stop treating my architecture like a junkyard.

Does that sound familiar?

The Lasagna Principle: Why Your AI Agent Architecture Needs Layers

Here’s what I’ve learned from years of building software and months of wiring AI agents together: when you’re the only one responsible for the whole stack, sloppy wiring isn’t just a nuisance. It’s a liability you carry alone.

Every solo operator I know who’s tried building an AI agent orchestration stack hits the same wall. They start with one automation. It works. They add a second. Still fine. By the fifteenth or sixteenth, they’re staring at a clump of Christmas tree wiring where a Slack message somehow triggers a scraper that writes to a database that kicks off another workflow that occasionally emails a client the wrong welcome email.

Software engineers addressed this problem decades ago with the N-tier architecture. The idea is old and boring, which is exactly why it works: you separate your system into layers, and each layer only talks to the one directly above or below it. Your interface doesn’t know how you store your data; your data doesn’t care how your workflows run. Each layer minds its own business.

I think of it as lasagna instead of spaghetti. Similar ingredients, completely different structure. And when something breaks (because it always will), you know exactly which layer to open up and poke around in.


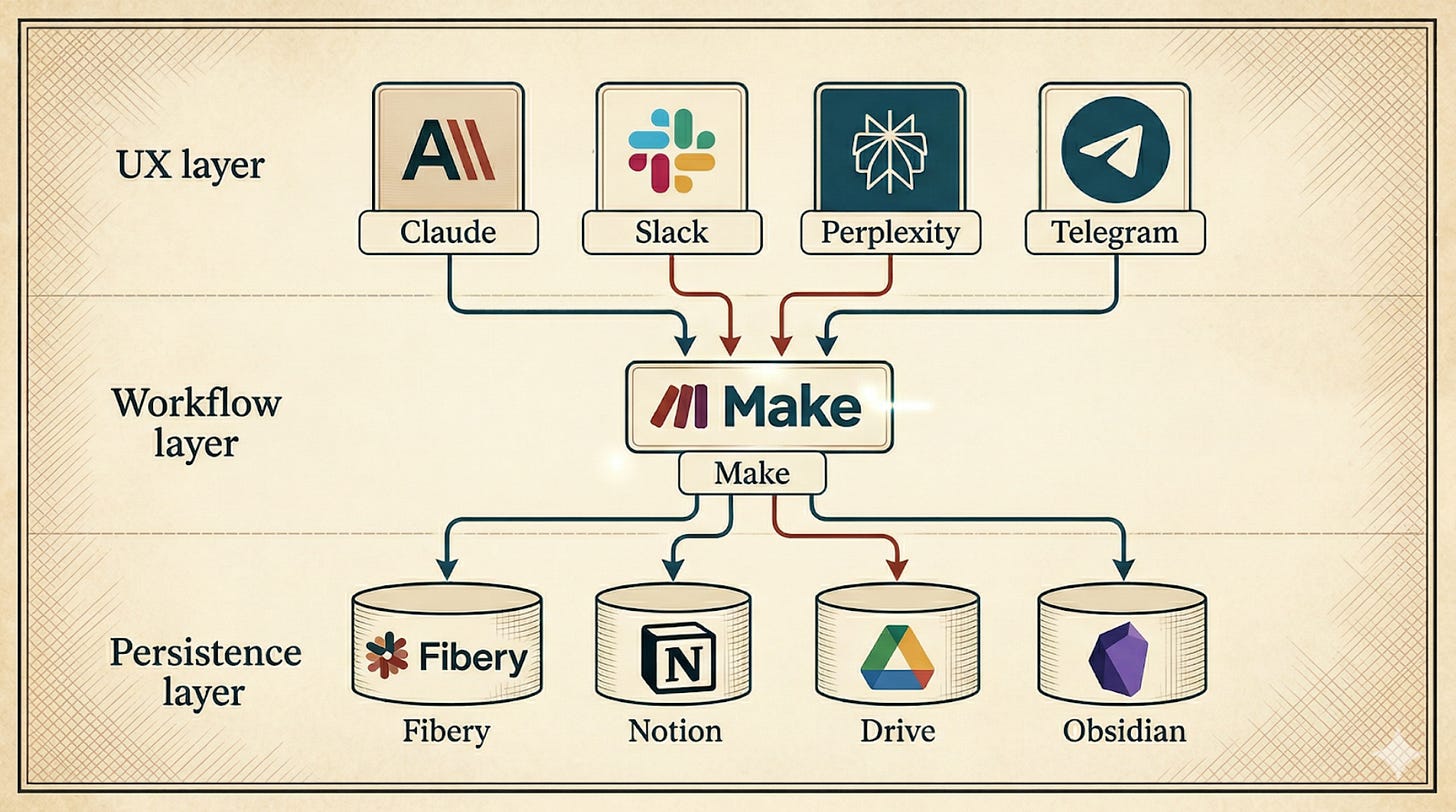

For my AI agent orchestration stack, I keep it simple and use just three layers: a UX layer where I interact with the system, a workflow layer where automations orchestrate the work, and a persistence layer where state lives.
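To make the layering concrete, here is a minimal Python sketch. It is not how Slack, Make, or Fibery work internally; every class name (`ChatChannel`, `TrackPublication`, `InMemoryStore`) is a hypothetical stand-in. It only shows the rule that each layer holds a reference to the layer directly below it and nothing else.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Persistence(Protocol):
    """What the workflow layer is allowed to expect from the layer below."""
    def save(self, record: dict) -> None: ...


class Workflow(Protocol):
    """What the UX layer is allowed to expect from the layer below."""
    def handle(self, url: str) -> str: ...


@dataclass
class InMemoryStore:
    """Persistence stand-in (hypothetical): it stores records and nothing more."""
    records: list[dict] = field(default_factory=list)

    def save(self, record: dict) -> None:
        self.records.append(record)


@dataclass
class TrackPublication:
    """Workflow stand-in (hypothetical): holds the logic, talks only downward."""
    store: Persistence

    def handle(self, url: str) -> str:
        self.store.save({"url": url})
        return f"Tracking {url}"


@dataclass
class ChatChannel:
    """UX stand-in (hypothetical): accepts input and returns the feedback."""
    workflow: Workflow

    def post(self, message: str) -> str:
        return self.workflow.handle(message.strip())


store = InMemoryStore()
channel = ChatChannel(workflow=TrackPublication(store=store))
reply = channel.post(" https://example.substack.com ")
print(reply)
```

Note that `ChatChannel` never sees the store. Swapping `InMemoryStore` for a real database client would not require touching the UX class at all.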

The Scenario: One Request Through Three AI Automation Layers

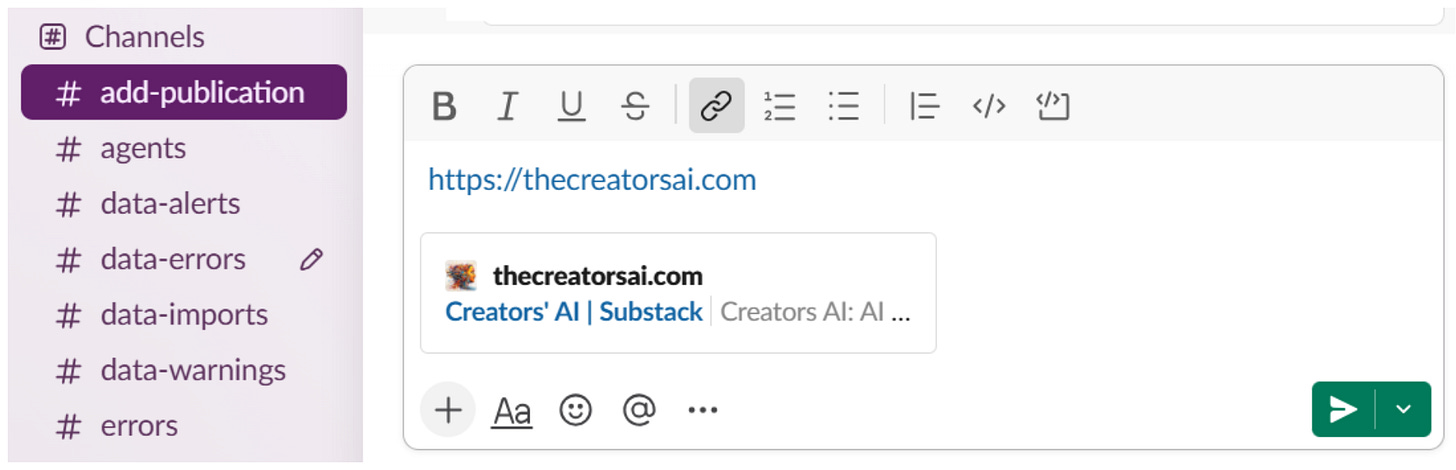

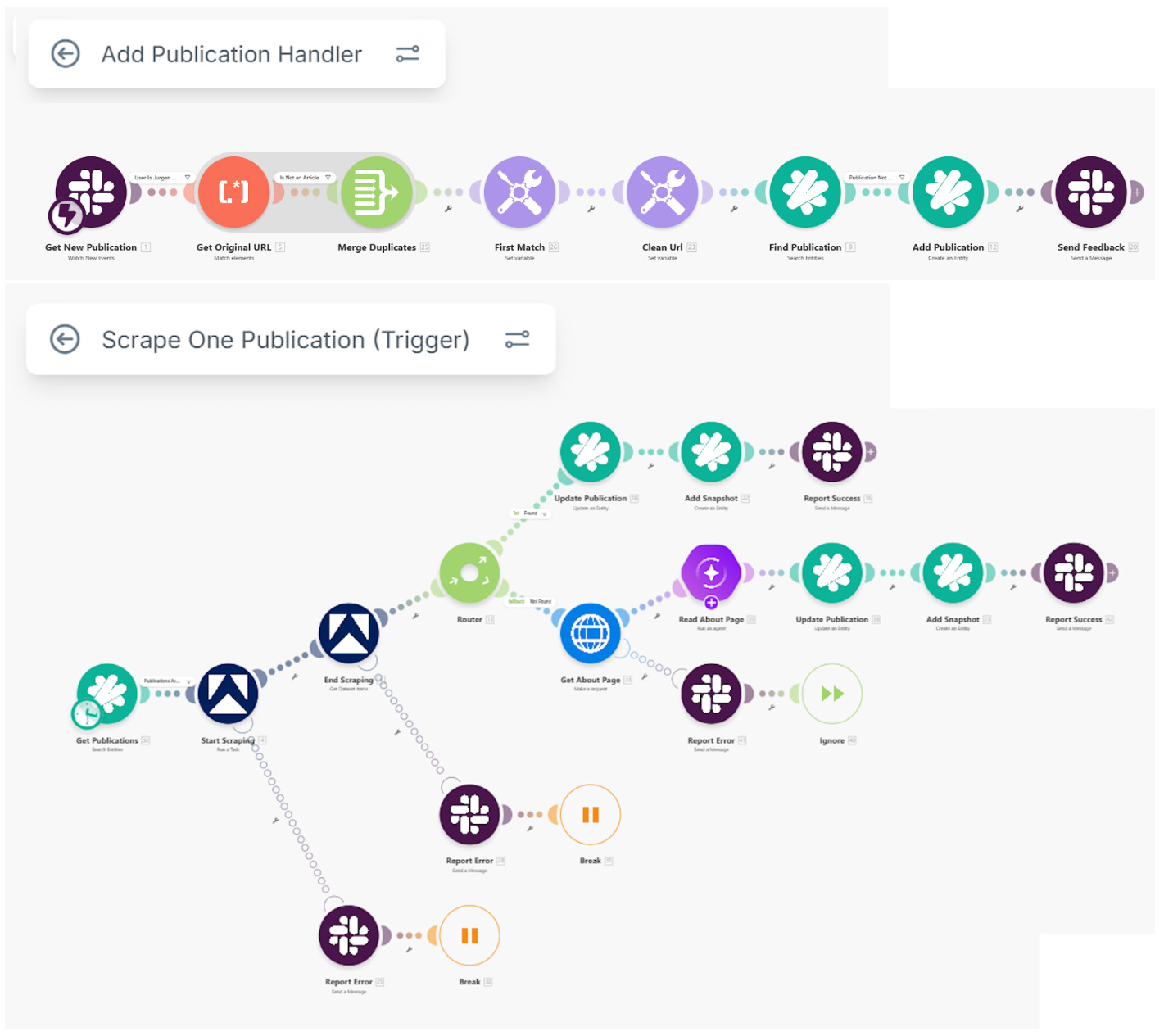

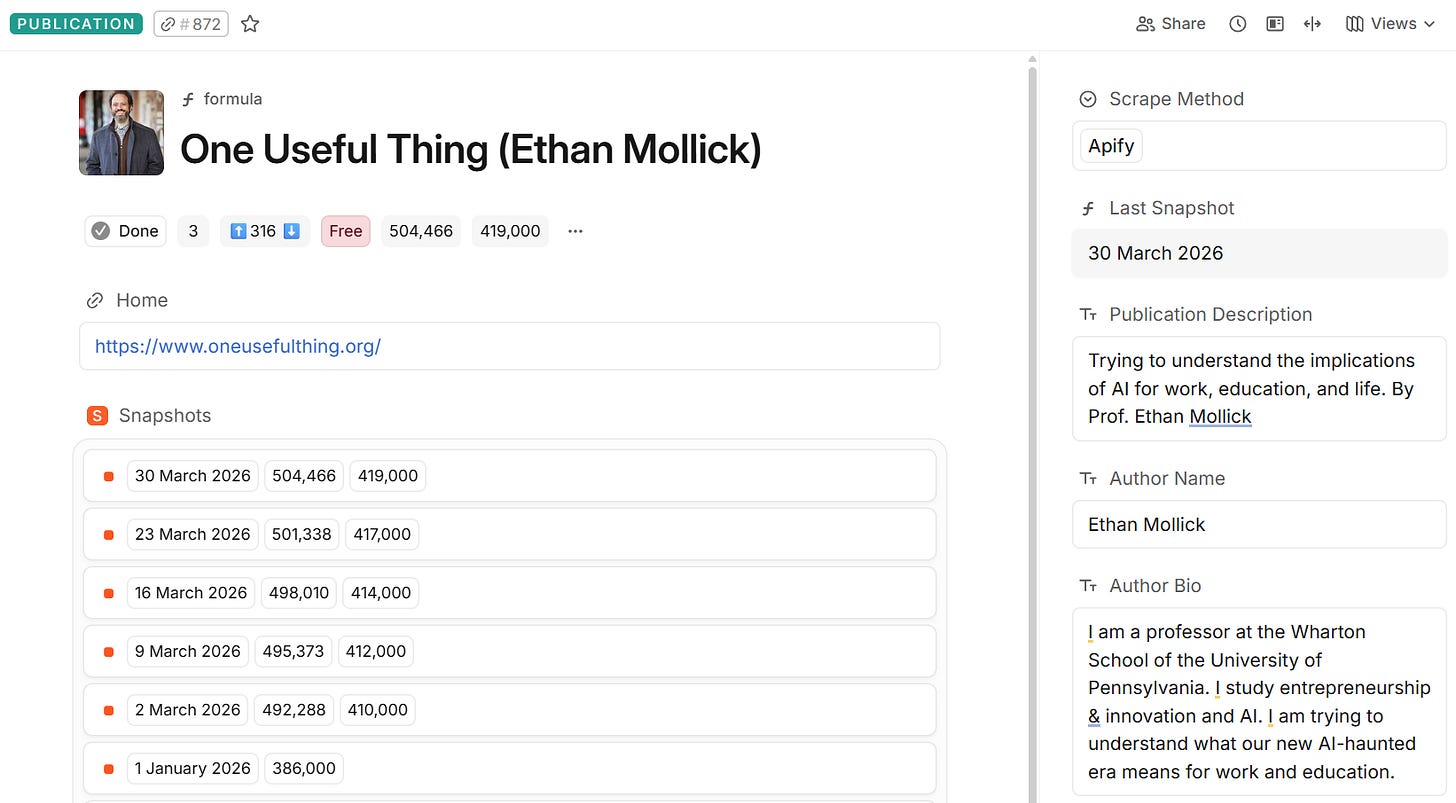

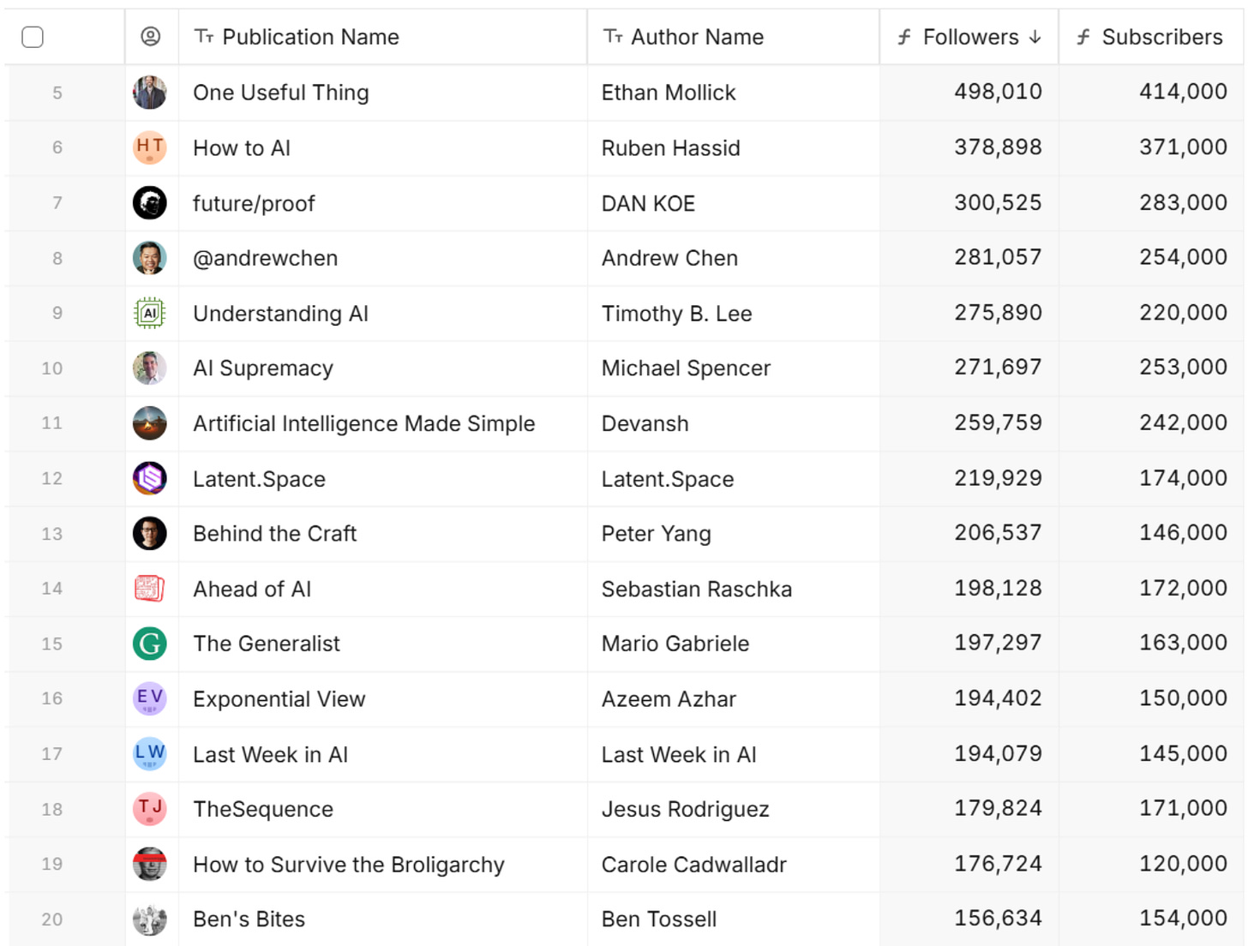

Let me walk you through what happens in each of these three layers when I add a new publication to my Substack tracking system.

UX Layer

Everything starts with a human doing something simple. In this example, I paste the URL of a Substack publication I discovered into a Slack channel. That’s it. I don’t configure anything, don’t fill out a form, don’t open a dashboard. I just drop a link and go back to whatever I was doing. Five seconds.

This is the UX layer: the surface where a person touches the system. It could be a Slack message, a Claude Cowork command, a voice prompt, even a button on a vibe-coded web app. The point is that this layer knows nothing about what happens next. It just accepts input and, eventually, shows output.

I could use Signal or Telegram instead of Slack, of course. In fact, I could use any of these tools to do exactly the same thing. When I’m already in the middle of a session with Claude Code, I can just ask Claude to store the Substack URL. As long as the different tools have access to the same workflow layer underneath, it makes no difference to anyone.
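A rough sketch of that idea in Python, assuming each chat tool can hand its message text to a small adapter. The function names (`make_entry_point`, `track_publication`) and the simple URL pattern are illustrative assumptions, not part of any real Slack or Telegram API.

```python
import re
from typing import Callable

# Deliberately naive pattern for the sketch: "http(s)://" up to the next whitespace.
URL_PATTERN = re.compile(r"https?://\S+")


def make_entry_point(name: str, workflow: Callable[[str], str]) -> Callable[[str], str]:
    """Build a UX adapter that extracts a URL and forwards it to the shared workflow."""
    def receive(text: str) -> str:
        match = URL_PATTERN.search(text)
        if match is None:
            return f"[{name}] no URL found"
        return f"[{name}] {workflow(match.group(0))}"
    return receive


tracked: list[str] = []


def track_publication(url: str) -> str:
    """Hypothetical stand-in for the one workflow that every entry point shares."""
    tracked.append(url)
    return f"tracking {url}"


slack = make_entry_point("slack", track_publication)
telegram = make_entry_point("telegram", track_publication)

print(slack("found this one https://a.substack.com today"))
print(telegram("https://b.substack.com"))
```

Both adapters end up calling the same function, so adding or dropping a chat tool means adding or dropping one line.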

That’s the part that lets me sleep. If Slack goes down or changes its API tomorrow, I lose a doorbell, not the house.

Workflow Layer

Below the surface, a Make scenario wakes up. It catches the Slack message, extracts the URL, calls an Apify scraper to grab the publication’s metadata (name, description, follower count, subscriber stats), and routes the results to the database. If the scraper comes back empty (happens about 2–3% of the time), a fallback kicks in: a Claude Haiku call that reads the raw HTML and extracts what it can.
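The try-the-scraper-then-fall-back logic can be sketched in a few lines of Python. This is an illustration of the pattern only: in my setup it lives in a Make scenario, and the two callables below are hypothetical stubs rather than real Apify or Claude API calls.

```python
from typing import Callable, Optional

Metadata = dict[str, object]
Scraper = Callable[[str], Optional[Metadata]]


def scrape_with_fallback(url: str, primary: Scraper, fallback: Scraper) -> Metadata:
    """Try the primary scraper; if it returns nothing, try the fallback."""
    result = primary(url)
    if result:  # None and an empty dict both count as "came back empty"
        return {**result, "source": "primary"}
    result = fallback(url)
    if result:
        return {**result, "source": "fallback"}
    raise LookupError(f"no metadata found for {url}")


def empty_scraper(url: str) -> Optional[Metadata]:
    """Stub (assumption): simulates the scraper coming back empty."""
    return None


def llm_extractor(url: str) -> Optional[Metadata]:
    """Stub (assumption): simulates an LLM call that reads the raw HTML."""
    return {"name": "Example Publication"}


record = scrape_with_fallback("https://example.substack.com", empty_scraper, llm_extractor)
print(record)
```

Tagging each result with its `source` is a design choice for the sketch: it makes it easy to count later how often the fallback actually fires.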

This is the workflow layer, and it’s the heart of any AI agent orchestration setup. It’s where the logic lives: the if-this-then-that decisions, the error handling, the retries. The workflow layer doesn’t store anything itself, and it doesn’t talk to humans directly. It just orchestrates. Receive a trigger, do some work, pass the result. Its job is connecting layers, calling external services, and sometimes triggering other workflows in sequence.

Instead of Make, you could use another automation tool such as n8n or Zapier. The point is that the layer responds to UX signals, interacts with proprietary data and external services, and then returns some feedback to the UX.

Persistence Layer

At the bottom, I use Fibery to receive the data and do what databases do: persist state. A new Publication record gets created along with the scraped metadata. A Snapshot record captures the follower and subscriber counts with a timestamp, so I can track a publication’s growth over time. Several Formula fields automatically compute things like “days since last scrape” and “average weekly growth” from the Snapshot history.
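For readers who want to see what those two computed fields amount to, here is a small Python sketch. It is not Fibery's formula syntax, and the definition of "average weekly growth" used here (change between the first and last snapshot, divided by the weeks elapsed) is my assumption for the example. The `Snapshot` class and the sample numbers are made up.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Snapshot:
    """One timestamped measurement of a publication (hypothetical shape)."""
    taken_on: date
    subscribers: int


def days_since_last_scrape(snapshots: list[Snapshot], today: date) -> int:
    """Days between the most recent snapshot and today."""
    latest = max(s.taken_on for s in snapshots)
    return (today - latest).days


def average_weekly_growth(snapshots: list[Snapshot]) -> float:
    """Subscriber change from first to last snapshot, per elapsed week."""
    ordered = sorted(snapshots, key=lambda s: s.taken_on)
    first, last = ordered[0], ordered[-1]
    elapsed_days = (last.taken_on - first.taken_on).days
    if elapsed_days == 0:
        return 0.0  # a single snapshot (or same-day snapshots) shows no trend yet
    return (last.subscribers - first.subscribers) / (elapsed_days / 7)


history = [
    Snapshot(date(2025, 1, 1), 1000),
    Snapshot(date(2025, 1, 15), 1100),
    Snapshot(date(2025, 1, 29), 1280),
]

print(days_since_last_scrape(history, today=date(2025, 2, 3)))  # 5
print(average_weekly_growth(history))  # 70.0
```

Because every snapshot is kept rather than overwritten, both values can be recomputed at any time from the history alone.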

Instead of Fibery, I could use Airtable or Coda to store the data. If the data is less relational and more document-oriented, I might opt for Google Drive or Obsidian. In fact, I use multiple backend tools for data persistence because, as Claude said to me, “Trying to cram everything into one tool is like trying to store wine, cheese, and laundry in the same closet. You can do it, but you probably shouldn’t.”

Here’s what matters: the persistence layer doesn’t know or care that Make or n8n or Zapier sent the data. It could just as easily have come from a Python script or a manual import. The schema is stable regardless of what’s happening in the layers above.

Why AI Agent Orchestration Needs Layers

So why bother with this layering when you could just tell your coding agents to wire Claude Cowork and Notion together and call it a day (or call it a night, in case you were sleeping)?

Because things change. I’ve already swapped my scraping approach twice in three months. First, a direct HTTP call, then Apify, then the Haiku fallback on top. My Fibery schema didn’t notice. Neither did my Slack channel. Each layer absorbed the change without infecting the others.

That’s the real payoff of proper AI agent architecture: you can replace any layer without rebuilding the whole stack. You can swap tools in and out without going back to Cursor or Claude Code for every minor change, and without piling on safeguards to make sure that change leaves everything else intact.

On top of that, the feedback of any workflow could end up anywhere. In the example I give here, the Make scenario always sends the feedback to Slack because I’m keeping it simple. But I could just as easily let the workflow notify me through Signal, WhatsApp, or Messenger. The orchestration layer decides. The UX layer just listens.
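Here is one way to picture "the orchestration layer decides" in Python. The `FeedbackRouter` class is a hypothetical illustration, and the notifiers are plain functions that append to lists instead of calling any real messaging API.

```python
from typing import Callable, Optional

Notifier = Callable[[str], None]


class FeedbackRouter:
    """Workflow-side helper (hypothetical): picks the channel for each message."""

    def __init__(self, default_channel: str) -> None:
        self._notifiers: dict[str, Notifier] = {}
        self._default = default_channel

    def register(self, channel: str, notifier: Notifier) -> None:
        self._notifiers[channel] = notifier

    def send(self, message: str, channel: Optional[str] = None) -> str:
        """Deliver the message and return the channel that was used."""
        target = channel or self._default
        self._notifiers[target](message)  # raises KeyError for an unregistered channel
        return target


slack_inbox: list[str] = []
signal_inbox: list[str] = []

router = FeedbackRouter(default_channel="slack")
router.register("slack", slack_inbox.append)
router.register("signal", signal_inbox.append)

router.send("Publication added")
router.send("Scraper failed, used fallback", channel="signal")
print(slack_inbox, signal_inbox)
```

The UX tools never choose where feedback lands. They only receive whatever the router sends them.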

Side note: I gave Claude access to Fibery and Make so that we’re designing the database schemas and workflow scenarios together. I create stuff and Claude reviews it, or Claude creates it and I tweak the details. All corrections and improvements go back into the Claude skills so Claude gets better at it over time.

Where It Hurts: The Cost of a Layered AI Workflow

I’d be lying if I said it’s all upside. Having more layers means more things that can break independently. Debugging a failure that starts in Slack, passes through Make, and surfaces as a wrong value in Fibery is like playing a game of telephone with robots. It takes longer to set up than a quick-and-dirty single-tool solution. And there’s a constant temptation to over-engineer, to add a fourth or fifth layer “just in case” when what you actually need is to ship something.

I’m building slower than the hammock crowd. But at 8 AM, when something has broken and there’s nobody to call, I want to know which layer to open. That’s worth the extra time.

The real question every Solo Chief has to answer for themselves: how much structure is worth the slowdown? I don’t have a formula for that. I only know what my tolerance for 8 AM debugging sessions is, and it’s getting lower every year.

Where I Cheat

Let me be honest here, because maps should include the shortcuts people actually take. The architecture I described is a work in progress. I know how things should work, but that doesn’t mean I always follow my own guidelines. Sometimes, the shortcuts are just too attractive (and mostly harmless).

For example, Fibery offers great visualization and management features on top of its databases. It’s incredibly easy to create tables, workflow boards, reports, and other overviews of the Substack publications I collected there. Following my own advice, I should be designing and building such user interfaces with separate tools and then retrieving and acting on this data through the middle layer. But I’d be giving myself a lot of work to build something that the database tool already offers right out of the box. Why bother building a status change workflow in Make when I can simply update that field directly in Fibery?

For now, I make a conscious choice to cheat. But only as long as I’m the sole user. The single entrepreneur. The Solo Chief. I get special privileges because I give nobody else direct access to the backend. If I ever decide to get others involved in the Substack publications data I’m collecting, I’m going to make sure to build a web app that can only fetch and change the data through the workflow layer, never directly. I might even use Claude Code for that.

From Spaghetti to Lasagna: Building Your AI Agent Stack

Am I happy with the AI agent orchestration architecture I have now?

Not yet. I still have too much spaghetti and too little lasagna. But the direction is clear, and that makes all the difference. Every update I make to my agentic orchestration must satisfy the N-tier architecture. Every new piece I add goes into one of three places: UX layer, workflow layer, or persistence layer.

Now that I have the horizontal architecture fleshed out, I can start separating the agents into their own vertical domains: one agent for Nonfiction Writing, one for Finance & Admin, one for Personal Branding, and so on. Each with their own objectives. Each with their own layers. My agentic organization will look less like a junkyard and more like something I’d trust to run while I’m asleep. I’m slowly building a city while others are just rapidly setting up tents.

I’m a big fan of lasagna, but I rarely turn down a plate of spaghetti. It all comes down to where you want to end up with your business. An unorganized bunch of wires will work fine as long as you’re still experimenting. But for anything more serious and viable, you’ll want to think about giving your AI agent orchestration stack a bit more structure.

Your overnight agentic hackathon is probably not a viable AI agent architecture.

Or, you know, just enjoy the spaghetti. I’m not your mother.

Jurgen, Solo Chief.

P.S. Show us what your architecture looks like. I’m curious!


Hit the spaghetti wall around automation 12. Everything worked, nothing was debuggable. The layering approach makes sense; I arrived at something similar by accident after spending three hours tracing a failure across six tools with no clear owner. Separating UX, workflow, and persistence means each layer can fail independently (or be replaced).

Turns out the persistence layer is where most complexity actually lives, not the orchestration. State is the hard problem. The Slack-to-Fibery example is a good one but I'd want to see what happens when the Fibery schema changes and the workflow layer doesn't know yet.

Agreed 100% with the layered architecture. For anyone technical (big caveat!), though, I'd propose rethinking the persistence and workflow implementations.

- I persist skills, agent files, agent-specific context, and small units of slow-changing shared context (vision, mission, etc.) in a GitHub repo, using Obsidian to "file - open vault - open folder as vault" if I need to edit them directly.

- Operational data is mostly in a relational database. Right now Supabase for ease of starting, but I don't use any Supabase-specific features in case I choose to port to a more affordable hosted solution over time.

- Right now I use Supabase blob storage for files. Eventually I'll move it to S3 or similar.

- For orchestration, I'm still tweaking, but I really like having my own lightweight deterministic orchestrator (looking at Restate for the durable execution piece). That way I can control exactly how I set up the validation gates, and any tool use is fairly easy to generate code for.

It's interesting because a friend is using n8n for workflows at his company, mainly to not have to generate a UX, but I still feel like you get more out of building a lightweight orchestrator than using the third-party tools (at least for now).

Certainly interesting times as we all become (at least for a while) distributed system engineers :)