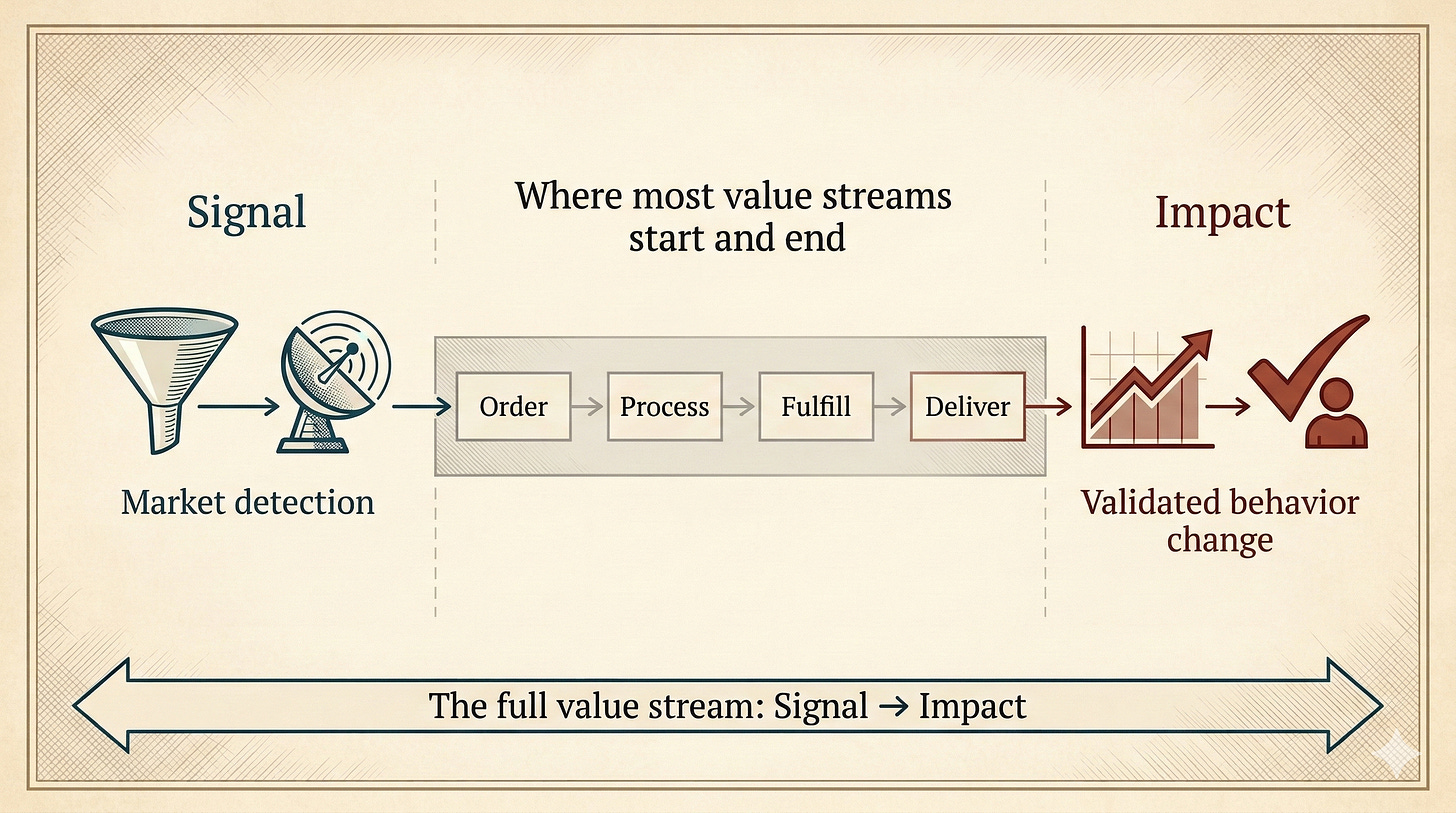

Value Stream Examples—From Signal to Impact

Why Almost Every Value Stream Example You Know Is Wrong

If your value stream starts with a customer order, you’ve already lost.

Most value stream examples begin with a request and end with delivery. Extend yours from first signal to proven impact, or watch competitors eat your lunch.

Last Monday, March 2, at 5 pm, I had a conversation with Al S. Brown about the challenges of solopreneurs, lone managers, and other solo chiefs. One of the action items my AI note-taker sent me an hour after the meeting was this one:

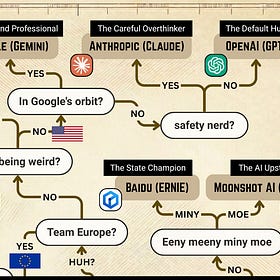

⏹️ “Describe your criteria for choosing which AI to use (e.g., integration, skills, personality vs. ambient access) and publish guidance so readers can decide which AI fits their context.”

The algorithm picked up a signal in our conversation.

On Wednesday, around 3 pm, I published the post “Which AI Should I Use?” with a nice tongue-in-cheek flowchart depicting a decision tree and brief descriptions of fifteen leading AI models. A few hours later, at 6:22 pm, Alex responded:

Two days after our meeting, the reader validated the value of my writing.

From signal to impact: 48 hours.
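
For readers who like to see the arithmetic, here is a minimal sketch in Python. The timestamps are assumptions: the year 2026 is inferred rather than stated, the signal is placed at about 6 pm on Monday (when the note-taker's action item arrived, an hour after the 5 pm meeting), and `lead_time_hours` is a hypothetical helper name, not part of any tool mentioned here.

```python
from datetime import datetime


def lead_time_hours(signal_at: datetime, impact_at: datetime) -> float:
    """Return the elapsed time from first signal to a later event, in hours."""
    if impact_at < signal_at:
        raise ValueError("the later event cannot precede the signal")
    return (impact_at - signal_at).total_seconds() / 3600


# Assumed timestamps (naive local time, year inferred as 2026).
signal_at = datetime(2026, 3, 2, 18, 0)      # action item arrives after the Monday meeting
published_at = datetime(2026, 3, 4, 15, 0)   # post goes out Wednesday around 3 pm
impact_at = datetime(2026, 3, 4, 18, 22)     # reader responds at 6:22 pm

print(f"signal to output: {lead_time_hours(signal_at, published_at):.1f} h")
print(f"signal to impact: {lead_time_hours(signal_at, impact_at):.1f} h")
```

With these assumed timestamps, the post was out about 45 hours after the signal, and the reader's reply landed a little over 48 hours after it. Measuring both numbers separates the time to output from the time to impact.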

Common Value Stream Examples

A value stream is a way of describing the work we do to delight our customers. Toyota embraced the concept of value streams decades ago with their Toyota Production System (TPS). Since then, value stream mapping has become a foundational practice in Lean literature, and the Agile community happily adopted the term as well.

I’ve come across several definitions of value streams, and they all boil down to the same thing:

A value stream is the set of actions needed to fulfill a value proposition from a request (order) to realization (delivery).

Here are some often-cited value stream examples:

Order-to-Cash (Customer order fulfillment) — From a customer placing an order, through fulfillment and delivery, to receiving payment. This one gets labeled “Order to Cash” or “Order Fulfillment” in most textbooks.

Project Request to Completion — From a stakeholder submitting a project or change request, through planning and execution, to delivery and closure.

Procure to Pay (Procurement) — From recognizing a need to buy something, through supplier selection and ordering, to receiving goods/services and paying the invoice.

These are perfectly fine value stream examples. But the problem is that they’re too short. We should extend the concept of the value stream in both directions.

Expanding the Value Stream Left: Start with Signal, Not Request

My first issue with the traditional description is that the value stream begins with a request or order from a customer. This is way too late! If you sit around waiting for customers to place an order with your company, you’ll soon be out of business.

In the age of AI, it’s becoming easier than ever to detect trends, intent, and desire long before a customer is even ready to place an order. In fact, while you sit there with your arms folded, waiting for the next request to come in, your competitors are using AI to steer intent and to create desire (toward their product, away from yours) long before the customer even realizes they want something.

When the customer finally understands the value of what the market offers, placing an order should be nothing more than a logical next step in the value stream they’re already in. Without them even knowing it. And you want them flowing through your value stream, not your competitor’s.

This means the standard value stream definition is outdated. We should extend the concept to the left to include the customer acquisition funnel all the way to the first signals in the market. The earlier you detect potential value for a customer, the better.

Expanding the Value Stream Right: End with Impact, Not Output

There’s another problem with the traditional value stream definition: it ends too early.

If you’ve been part of the agile community for a while, you’ve probably heard the stories. Product managers treat Scrum teams like feature factories. Product backlogs overflow with pointless user stories. Sprint after sprint, teams deploy product increments that never delight a single user.

The problem is that everyone is so busy doing work and delivering output that nobody has any time left for validating outcomes and impact. Few people in the organization spend their time checking whether the busywork was worth all the trouble. The last column on Scrum and Kanban boards is always called Done. It’s never called Is Everybody Happy?

Granted, I’m far from the first to point out this pervasive problem in the agile world. Many smart coaches, consultants, writers, and speakers have discussed this phenomenon at length. But few have been able to solve the problem once and for all.

Sadly, we can’t change the entire world (even though I wrote a book about it). But in our own work, we can take an important step: always name the last column of our task boards Happy or Proven, so we stop fooling ourselves into believing that our work is done. Nothing is called done as long as the user isn’t happy about the impact.

We should extend the standard value stream definition to the right, beyond output, to include the validation process all the way to impact: has anything actually changed for the better?
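
One way to picture this is a task board where a card only counts as finished once someone has recorded evidence of impact. The sketch below is a minimal illustration in Python, not a description of any real Kanban tool. The names `Stage`, `Card`, `deliver`, `prove`, and `is_finished` are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    SIGNAL = 1       # something observed, nothing built yet
    IN_PROGRESS = 2  # work under way
    DELIVERED = 3    # output shipped, impact still unknown
    PROVEN = 4       # impact validated with evidence


@dataclass
class Card:
    title: str
    stage: Stage = Stage.SIGNAL
    impact_evidence: str = ""

    def deliver(self) -> None:
        """Mark the output as shipped. This is not the end of the stream."""
        self.stage = Stage.DELIVERED

    def prove(self, evidence: str) -> None:
        """Move a delivered card to PROVEN, but only with written evidence."""
        if self.stage is not Stage.DELIVERED:
            raise ValueError("only delivered work can be proven")
        if not evidence.strip():
            raise ValueError("impact needs evidence")
        self.impact_evidence = evidence
        self.stage = Stage.PROVEN

    @property
    def is_finished(self) -> bool:
        # Shipping alone never counts as finished.
        return self.stage is Stage.PROVEN


card = Card("Publish a guide on choosing an AI")
card.deliver()
print(card.is_finished)  # delivered, but not yet proven
card.prove("A reader replied that the guide helped them decide")
print(card.is_finished)
```

The design choice that matters is that `prove` refuses an empty evidence string, so a card cannot drift into the last column just because the work shipped.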

New Value Stream Definition

For several years now, I’ve discussed the importance of extending the concept of the value stream in both directions, and I’m unlikely to change my mind. My preferred alternative interpretation of the value stream is this:

A value stream is the set of actions needed to fulfill a value proposition from a signal (an observed behavior) to impact (a changed behavior).

Fortunately, if you dig a little further, it’s possible to find better value stream examples:

Prospect-to-Customer (Sales) — From identifying or attracting a prospect, through qualification and sales activities, to converting them into a paying customer and successfully getting them onboarded.

Issue to Resolution (Customer service) — From a customer raising a question or problem, through investigation and handling, to confirmed resolution and feedback.

Hire-to-Retire (Employee lifecycle) — From identifying the need for a new employee, through recruitment, onboarding, and development, to exit or retirement.

Value Stream Examples for Solo Chiefs

The Solo Chiefs among us might recognize the following examples closer to home:

Your value stream might start when you hear someone talking about Claude Cowork. It could end with the first participants finishing your brand-new eight-hour online course named Claude Cowork for Creatives.

Your value stream may begin by detecting an increase in the search term “portfolio career” on Google Trends and end with the first five-star ratings on a new book that you just published, titled The Portfolio Career Professional.

Your value stream could kick off when someone tells you about the struggles of their organization. Many difficult months later, it could end with you wrapping up a successful transformation initiative, with this company recommending you as their preferred consultant.

Value doesn’t start with an order, and it doesn’t end with delivery.

Value begins with a user signal and ends with actual impact.

Jurgen, Solo Chief

P.S. What’s the last column on your task board called—and does it actually prove anyone’s happy?

Interesting reframing, especially the extension from signal → impact. That shift alone probably removes half of the “feature factory” pathology we still see in many teams.

One thought that might strengthen the model even further: in practice these streams tend to behave less like a line and more like nested learning loops.

Between signal → output → outcome → impact, there are usually several feedback layers that need to run continuously:

• Output loop – Did we build the thing correctly?

• Implementation loop – Is it actually being used the way we expected?

• Outcome loop – Is user behavior changing?

• Impact loop – Did anything meaningful improve?

Without those loops, teams often declare “Done” while the real system dynamics remain invisible.

The same applies on the left side of the stream. Detecting signals works best when people explicitly treat them as hypotheses rather than truths. A simple pattern that has worked well in some contexts is:

S = Situation (facts)

O = Observation (pattern emerging)

H = Hypothesis (possible explanation)

N = Next step (experiment)

That turns the value stream into a continuous discovery–delivery–learning cycle, which seems especially relevant now that AI tools accelerate signal detection but also increase the risk of chasing noise.

In other words:

Signal → Impact is the span.

Learning loops are the engine that actually moves you through it.

Thanks for mentioning me in your post! It was AWESOME to see that article so soon after we talked. Customer delight can definitely come when you cut down the time between signal and value delivered.

I actually keep a Kanban board for my "work" in retirement, and the final column is actually bifurcated into two categories: "Success!" and "Abandoned". If I am not sure that the value has been delivered, I will leave the card in a "Review" column. I wait with it there to see if it delivered the value anticipated, before deciding if it is a success.

I got inspiration for the success/abandoned approach from Personal Kanban. If you don't already know Jim and Toni over at https://humanework.substack.com/ I highly recommend them to you. They do some good work.